What we learned building a remote MCP server


We spent months building local MCP integrations that worked well – if you could get past the setup. Node.js, npm, config files, and environment variables. When the protocol added remote server support, we rebuilt everything behind a single URL. What came back taught us more about LLM tool design than anything we'd built before.

Ahmed Bashir and Luka Košenina walked through this reality on an October 2025 DevRev LinkedIn Live: a strong remote MCP experience does not come out of the box. It takes deliberate tool design, iteration, and often extra annotation or narrower tools when a query shape is ambiguous.

This post is about what worked, what broke, and why the difference matters.

From local to remote

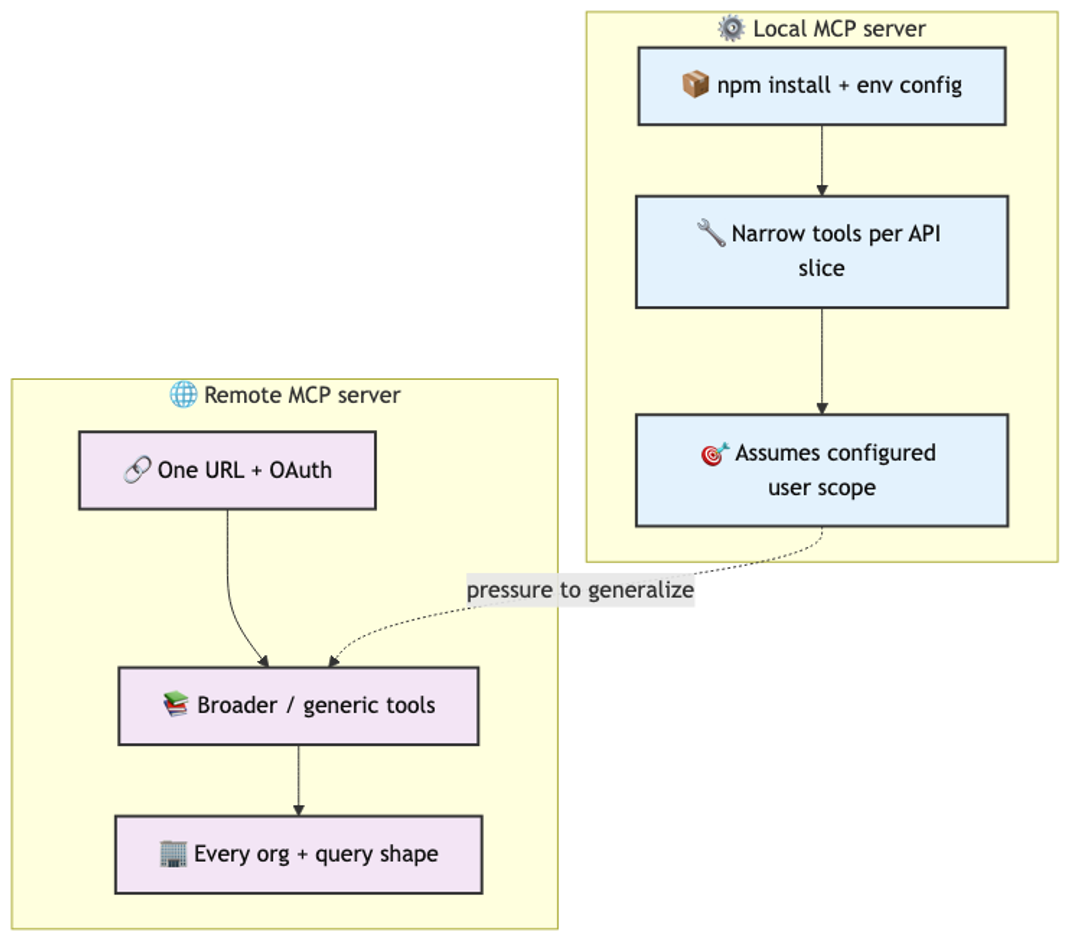

Our local MCP server shipped as an npm package. Developers installed it, configured credentials, and connected it to Claude Desktop or Cursor. It worked well because we could be precise: each tool mapped to a narrow slice of DevRev's API. A tool for listing issues. A tool for searching articles. A tool for sprint queries. Each one small, focused, and easy for the LLM to reason about.

Remote MCP changes the contract. There is no install step. You give the client a URL, authenticate via OAuth, and you are connected. The friction drops to nearly zero.

But we had to redesign the tools. Local tools could assume a specific user's configuration and scope. Remote tools need to serve every user in every org with every possible query shape. That pressure toward generality is where things started to break.

What works well

Simple, intent-clear queries work beautifully on remote MCP. "What issues should I work on today?" routes cleanly to a filtered search scoped to the authenticated user, sorted by priority and due date. The LLM picks the right tool, fills the parameters correctly, and returns a useful result.

Sprint-filtered queries also perform well. "Show me everything in the current sprint" or "what's overdue in sprint 47" give the LLM enough structure to construct a valid request. The tool schema has a sprint parameter, the LLM fills it, and the response comes back fast.

Overdue task detection is another strong case. The concept of "overdue" maps to a simple date comparison, and the LLM handles temporal reasoning well enough to construct the right filter. These queries share a pattern: they map to a single tool call with two or three parameters filled from obvious context.
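To make the "simple date comparison" concrete, here is a minimal TypeScript sketch. The `IssueRecord` shape and its field names are assumptions for illustration, not DevRev's actual data model.

```typescript
// Hypothetical issue shape; field names are illustrative assumptions.
interface IssueRecord {
  id: string;
  sprint: string;
  dueDate: string; // ISO 8601 date, e.g. "2025-10-01"
  closed: boolean;
}

// An issue is overdue when it is still open and its due date is in the past.
function isOverdue(issue: IssueRecord, now: Date): boolean {
  return !issue.closed && new Date(issue.dueDate).getTime() < now.getTime();
}

// "What's overdue in sprint 47" needs only two inputs: a sprint and the current time.
function overdueInSprint(issues: IssueRecord[], sprint: string, now: Date): IssueRecord[] {
  return issues.filter((issue) => issue.sprint === sprint && isOverdue(issue, now));
}
```

Both inputs come straight from the question or from the clock, which is why the model rarely gets these wrong.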

What breaks

We ran the same query against both local and remote MCP: "give me a summary of all issues updated in the last day and that are relevant to the MCP sprint 9." On local, it returned the correct result. On remote, it failed – not with an error, but with a wrong answer. The LLM confidently returned data that did not match the query.

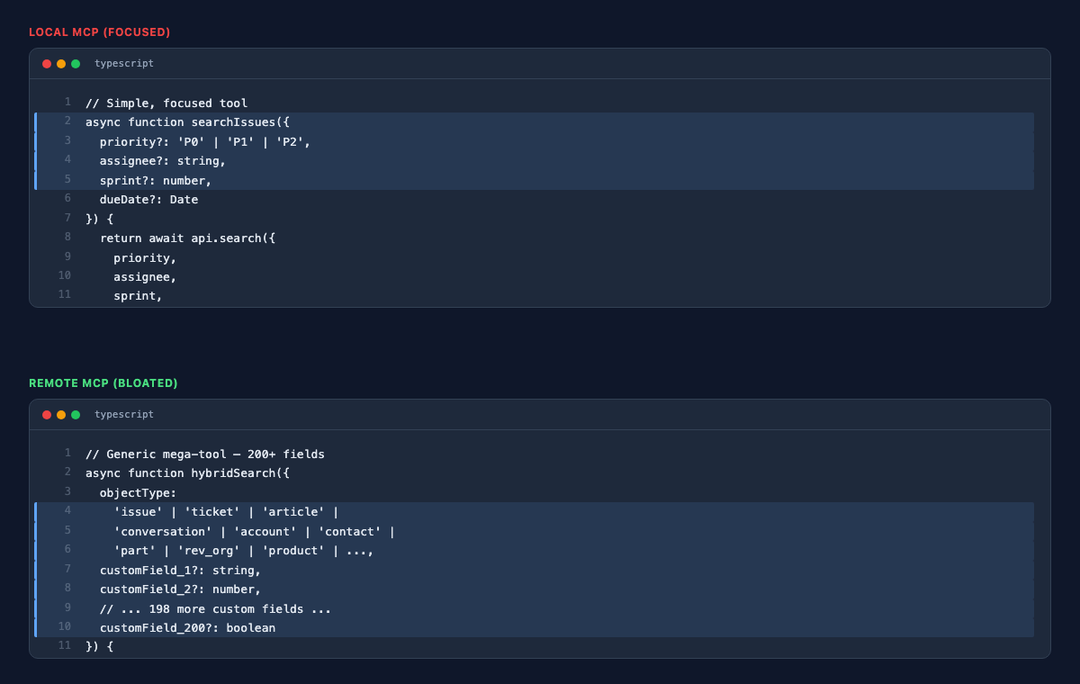

The root cause was tool schema complexity. Our remote server's hybrid search tool spans roughly twenty object types. Issues, tickets, articles, conversations, accounts, contacts, parts, rev orgs – each with their own filterable fields, enums, and relationships. The schema for a single tool ballooned into hundreds of lines.

When the LLM receives that schema, it has to parse the full structure before it can reason about which parameters to fill. With twenty object types multiplied by their respective fields, the model has to hold the entire taxonomy in its working context just to construct one query. It gets confused. It fills the wrong enum value. It omits a required filter. It hallucinates a field name that sounds right but does not exist.
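One way to surface these three failure modes instead of letting them pass silently is to check each call's arguments before running it. The sketch below is illustrative only: `ParamSpec` and `checkArguments` are hypothetical names, and the spec format is a simplification of a real JSON Schema.

```typescript
// Hypothetical, simplified parameter spec; real tool schemas are JSON Schema.
interface ParamSpec {
  required: boolean;
  allowed?: string[]; // permitted enum values, if the parameter is an enum
}

// Returns a list of problems instead of silently accepting a malformed call.
function checkArguments(
  spec: Record<string, ParamSpec>,
  args: Record<string, string>
): string[] {
  const problems: string[] = [];
  for (const key of Object.keys(args)) {
    // Hallucinated field: the name sounds right but is not in the schema.
    if (!Object.prototype.hasOwnProperty.call(spec, key)) {
      problems.push("unknown field: " + key);
      continue;
    }
    // Wrong enum value.
    const allowed = spec[key].allowed;
    if (allowed !== undefined && allowed.indexOf(args[key]) === -1) {
      problems.push("invalid value for " + key + ": " + args[key]);
    }
  }
  // Omitted required filter.
  for (const key of Object.keys(spec)) {
    if (spec[key].required && !Object.prototype.hasOwnProperty.call(args, key)) {
      problems.push("missing required field: " + key);
    }
  }
  return problems;
}
```

A check like this catches malformed calls, but not a call that is well-formed and still wrong for the question, which is the harder case described above.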

The live session was framed around the same idea: nothing here "just works" on day one without validation. Complex aggregation queries – "how many P0 bugs were filed last quarter grouped by component" – require the LLM to compose multiple filters, select the right object type, and understand our data model's grouping semantics. The mega-tool schema turns each of those steps into a place where the model can silently fail.

Why tool design is everything

The core lesson is blunt: tool design matters more than any other part of your MCP implementation. More than auth. More than transport. More than the server framework you choose.

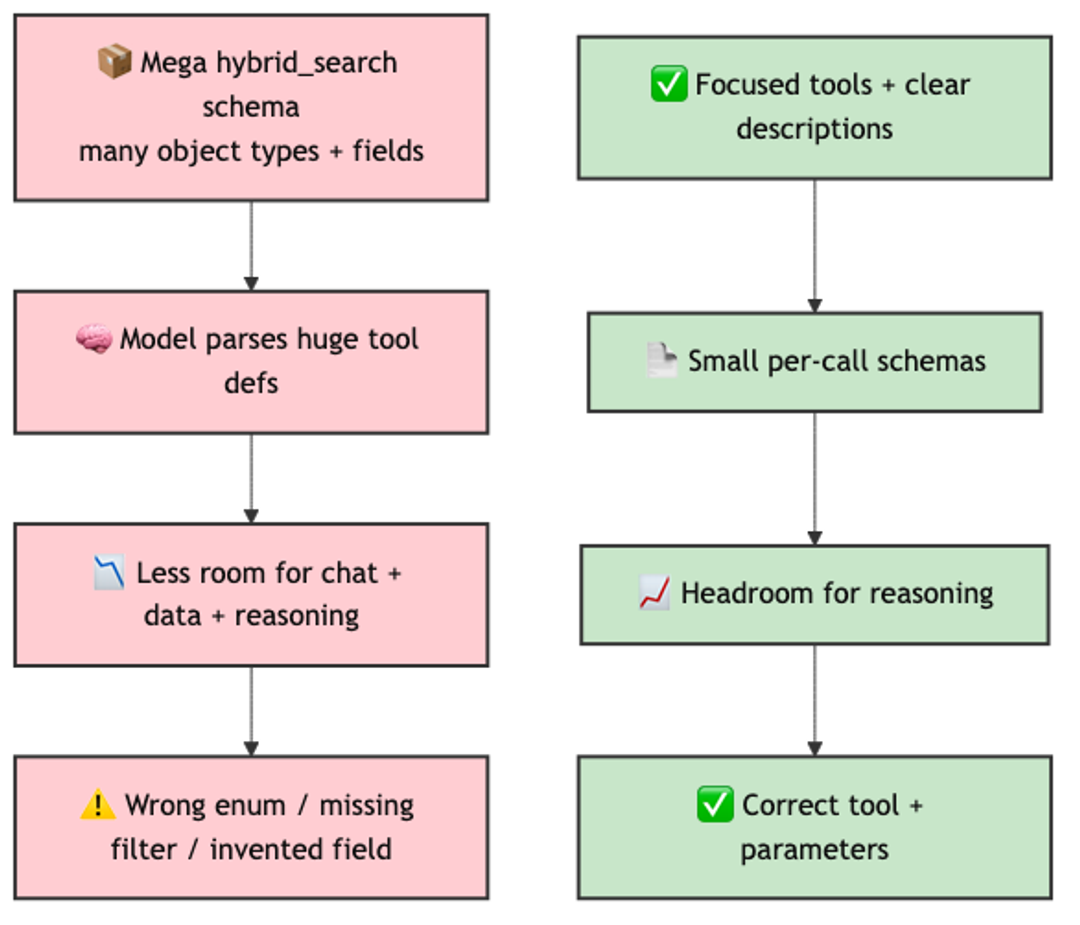

We found that tools perform better when they have fewer inputs and more information in the description. A tool with three required parameters and a rich natural-language description of what it does, when to use it, and what each parameter means will outperform a tool with fifteen optional parameters and a terse description. The LLM reads the description to decide whether to call the tool. It reads the parameter descriptions to decide how to fill them. If either is ambiguous, accuracy drops.

Focused tools per object type consistently outperform one mega-tool. A search_issues tool that accepts priority, assignee, sprint, and date range is easier for the model to use than a hybrid_search tool that accepts an object type discriminator plus a union of every field across every type. The cognitive load on the LLM mirrors the cognitive load on a human reading the same schema. If a human would struggle to fill the form correctly, the LLM will too.
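The difference is easy to see when the two input shapes sit side by side. The types below are a hypothetical sketch: the field names and enum values are assumptions, and the real hybrid search schema is far larger.

```typescript
// Focused tool: every field is meaningful for issues. Field names are assumptions.
interface SearchIssuesInput {
  priority?: "P0" | "P1" | "P2" | "P3";
  assignee?: string;
  sprint?: string;
  updatedAfter?: string; // ISO 8601 date
}

// Mega-tool: a discriminator plus the union of fields from every object type.
interface HybridSearchInput {
  objectType: "issue" | "ticket" | "article" | "conversation" | "account" | "contact";
  priority?: string;
  assignee?: string;
  sprint?: string;
  updatedAfter?: string;
  severity?: string; // tickets only
  articleStatus?: string; // articles only
  accountTier?: string; // accounts only
}

// With the focused schema there is little room for a wrong combination.
const focusedCall: SearchIssuesInput = { priority: "P0", sprint: "47" };

// The mega-tool schema accepts combinations that make no sense, such as
// filtering articles by sprint. Nothing in the schema flags this as wrong.
const ambiguousCall: HybridSearchInput = { objectType: "article", sprint: "47" };
```

Both calls type-check, but only the first is guaranteed to mean something. The second is the kind of request that returns a confident, wrong answer.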

We also learned that parameter descriptions carry more weight than parameter names. A parameter named q with the description "Full-text search query matched against title, description, and comment body" guides the model far better than a parameter named search_query with no description at all.
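As a sketch of what this looks like in practice, the snippet below defines a tool using the `name`, `description`, and `inputSchema` fields that MCP tool definitions carry. The tool itself, its description text, and the `undocumentedParams` helper are hypothetical examples, not DevRev's shipped definitions.

```typescript
// Minimal subset of an MCP tool definition: name, description, JSON Schema for inputs.
interface ParamSchema {
  type: string;
  description?: string;
}

interface ToolDefinition {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, ParamSchema>;
    required: string[];
  };
}

// Hypothetical tool: the descriptions carry the guidance, not the parameter names.
const searchIssuesTool: ToolDefinition = {
  name: "search_issues",
  description:
    "Search issues in the authenticated user's org. Use for questions about " +
    "issues by text, sprint, or assignee. Do not use for tickets or articles.",
  inputSchema: {
    type: "object",
    properties: {
      q: {
        type: "string",
        description:
          "Full-text search query matched against title, description, and comment body",
      },
      sprint: { type: "string", description: "Sprint name or number, for example 47" },
      assignee: { type: "string" }, // no description: the model has to guess the format
    },
    required: ["q"],
  },
};

// Lists parameters that ship without a description so they can be fixed before release.
function undocumentedParams(tool: ToolDefinition): string[] {
  const props = tool.inputSchema.properties;
  return Object.keys(props).filter((key) => !props[key].description);
}
```

Running `undocumentedParams(searchIssuesTool)` flags `assignee`, the one parameter where the model would have to guess whether to pass a name, an email, or an ID.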

Context window saturation

There is a budget problem that most MCP implementations will hit as they scale. Every tool you expose to the LLM consumes context window space. The client sends the full schema of every available tool at the start of each conversation. If you expose thirty tools with complex schemas, you may consume 30-40% of the context window before the user even asks a question.
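A back-of-the-envelope estimate makes the budget visible. The sketch below assumes roughly four characters per token, which is a crude heuristic rather than a real tokenizer, so the result is only an order-of-magnitude figure. The function names are hypothetical.

```typescript
// Rough heuristic, assumed for illustration: about four characters per token.
// Real tokenizers vary, so treat the result as an order-of-magnitude estimate.
function estimateTokens(text: string): number {
  return Math.ceil(text.length / 4);
}

// Fraction of the context window consumed by tool schemas before the first user message.
function schemaShare(toolSchemas: object[], contextWindowTokens: number): number {
  const schemaTokens = toolSchemas
    .map((schema) => estimateTokens(JSON.stringify(schema)))
    .reduce((sum, tokens) => sum + tokens, 0);
  return schemaTokens / contextWindowTokens;
}
```

With made-up but plausible numbers: thirty schemas of about 8,000 characters each come to roughly 60,000 estimated tokens, which is 30% of a 200,000-token window.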

The problem compounds with domain objects. DevRev supports custom fields on every object type, and some customers push this hard. We had one customer with over 200 custom fields on the "contact" object alone. Every tool that touched contacts – create, update, search, list – carried the full schema for all 200+ fields in its input and output definitions. The tool schemas exploded, and the LLM's accuracy cratered because most of its context window was occupied by field definitions it would never use in a given query.

This leaves less room for the actual conversation, the retrieved data, and – critically – the model's reasoning. We observed that as tool count and schema complexity increased, response quality decreased even for queries that previously worked well. The model was not failing because the tool was wrong. It was failing because it had no room left to think.

We had to rethink the entire tool set to address this. Instead of baking every field into every tool schema, we are moving to a generic tool set for object management – create, read, update, delete, list – where the object schema is obtained on demand. The LLM first discovers what fields exist on the object it needs, then constructs the query with only the relevant fields in context. This keeps the per-tool schema small and pushes the complexity into a two-step interaction pattern that the model handles well.
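The two-step pattern can be sketched as follows. Everything here is a hypothetical stand-in: `objectFields` plays the role of an org's schema registry, and `describeObject` and `buildListRequest` are illustrative names, not the tools we ship.

```typescript
// Hypothetical in-memory stand-in for an org's object schemas, including custom fields.
const objectFields: Record<string, string[]> = {
  contact: ["display_name", "email", "region", "custom_loyalty_tier"],
  issue: ["title", "priority", "sprint", "assignee"],
};

// Step 1: the model asks which fields exist on the object it needs.
function describeObject(objectType: string): string[] {
  if (!Object.prototype.hasOwnProperty.call(objectFields, objectType)) {
    throw new Error("unknown object type: " + objectType);
  }
  return objectFields[objectType];
}

// Step 2: a generic list call that accepts only fields returned by step 1.
interface ListRequest {
  objectType: string;
  filter: Record<string, string>;
}

function buildListRequest(objectType: string, filter: Record<string, string>): ListRequest {
  const known = describeObject(objectType);
  for (const field of Object.keys(filter)) {
    if (known.indexOf(field) === -1) {
      throw new Error("field not on " + objectType + ": " + field);
    }
  }
  return { objectType: objectType, filter: filter };
}
```

The list tool's own schema stays the same size whether an object has four fields or two hundred, because the field list only enters the context when the model asks for it.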

The practical ceiling we found was somewhere around ten to fifteen well-designed tools before quality started to degrade. Beyond that, you need to be strategic: group related operations, trim optional parameters aggressively, and consider whether a tool truly needs to be in the MCP surface or whether it belongs behind a different interface.

Custom MCP tools via snap-ins

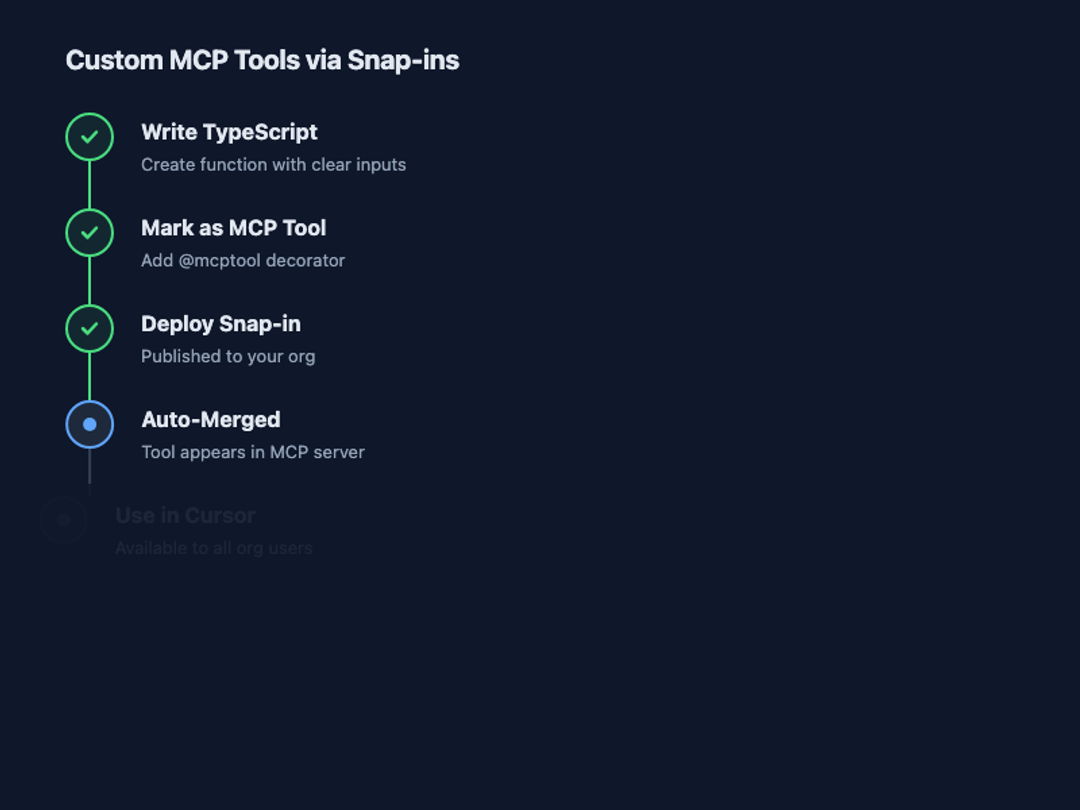

One of the more interesting patterns we shipped is letting teams extend their org's remote MCP server without touching our code. DevRev's snap-in framework lets developers write functions in TypeScript, mark them as MCP tools, and deploy to their org's server.

The function runs in our infrastructure. The tool schema gets merged into the org's MCP server automatically. When a user connects to their org's remote MCP URL, they see both DevRev's built-in tools and any custom tools their team has deployed.
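Conceptually, the merge looks something like the sketch below. This is not the snap-in framework's API: `OrgTool` and `mergeToolLists` are hypothetical names, and rejecting duplicate tool names is an assumed policy chosen for the example.

```typescript
interface OrgTool {
  name: string;
  description: string;
  source: "built-in" | "custom";
}

// Combines built-in and custom tools into one list. Duplicate names are rejected
// so a custom tool cannot shadow a built-in one (an assumed policy, see above).
function mergeToolLists(builtIn: OrgTool[], custom: OrgTool[]): OrgTool[] {
  const merged = builtIn.slice();
  for (const tool of custom) {
    if (merged.some((existing) => existing.name === tool.name)) {
      throw new Error("duplicate tool name: " + tool.name);
    }
    merged.push(tool);
  }
  return merged;
}
```

From the client's point of view there is a single tool list; the `source` field here only exists to make the example readable.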

This matters because every team has domain-specific queries that a general-purpose tool cannot anticipate. A security team might want a tool that cross-references vulnerabilities with open tickets. A support team might want a tool that pulls customer sentiment alongside ticket status. These tools are narrow, focused, and specific – exactly the design pattern that works best with LLMs.

The snap-in approach also encourages good tool design as a side effect. Developers writing a single function for a single purpose tend to create tools with clear inputs and clear descriptions. The temptation to build a mega-tool is lower when the unit of deployment is a single function.

Honest framing

MCP is not always the fastest, most accurate way of getting information. For many queries, a well-built dashboard or a direct API call will outperform an LLM-mediated tool call in both speed and reliability. The value of MCP is in flexibility – the ability to ask ad hoc questions in natural language without building a new view or writing a new query.

But that flexibility comes with a tax. There is a lot of experimentation and a lot of validation that goes into getting consistently great results through MCP – including cases where the fix is new or better-described tools, not a smarter model. We test every tool against a suite of representative queries. We measure not just whether the tool returns data, but whether the LLM calls the tool correctly across different phrasings of the same intent. We track parameter fill accuracy as a first-class metric.
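A minimal sketch of how parameter fill accuracy could be computed is shown below. The types and the exact-match scoring rule are assumptions for illustration; a real harness may score partial matches or normalize values before comparing.

```typescript
interface ToolCall {
  tool: string;
  args: Record<string, string>;
}

interface EvalCase {
  phrasing: string; // one way a user might ask
  expected: ToolCall; // the call we want the model to make
  actual: ToolCall; // the call the model made, recorded from a test run
}

// A call counts as correct only if the tool and every argument match exactly.
function callMatches(expected: ToolCall, actual: ToolCall): boolean {
  if (expected.tool !== actual.tool) return false;
  const expectedKeys = Object.keys(expected.args);
  if (expectedKeys.length !== Object.keys(actual.args).length) return false;
  return expectedKeys.every((key) => expected.args[key] === actual.args[key]);
}

// Parameter fill accuracy: share of phrasings that produced a fully correct call.
function fillAccuracy(cases: EvalCase[]): number {
  if (cases.length === 0) return 0;
  const correct = cases.filter((c) => callMatches(c.expected, c.actual)).length;
  return correct / cases.length;
}
```

Grouping cases by intent and comparing accuracy across phrasings shows whether a tool is robust or only works for the wording it was designed around.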

The teams that will get the most out of MCP are the ones that treat tool design as a product discipline, not an afterthought. Write the descriptions like you are writing documentation for a junior developer who has never seen your API. Test with adversarial phrasings. Measure accuracy, not just availability. And be willing to split a complex tool into three simple ones even when it feels redundant.

Remote MCP drops the setup barrier to nearly zero. That's genuinely valuable. But it shifts the hard problem from "how do I install this" to "how do I design tools that LLMs can reliably use." We are still learning, and the tooling continues to mature. The teams building MCP servers today are writing the playbook for how AI agents interact with software systems. Getting the tool design right is the highest-leverage work in that stack.

Learn more

If you're building MCP tools or want to try DevRev's remote MCP server, visit https://developer.devrev.ai/mcp for setup details and best practices.

---

Thanks to the DevRev MCP team for sharing their work openly, including the parts that do not work yet. See also the live walkthrough "DevRev Remote MCP – Real use cases for builders" with Ahmed Bashir and Luka Košenina (October 2025).

Earlier Cursor-focused MCP demos are in the session with Ahmed and Shivam.
